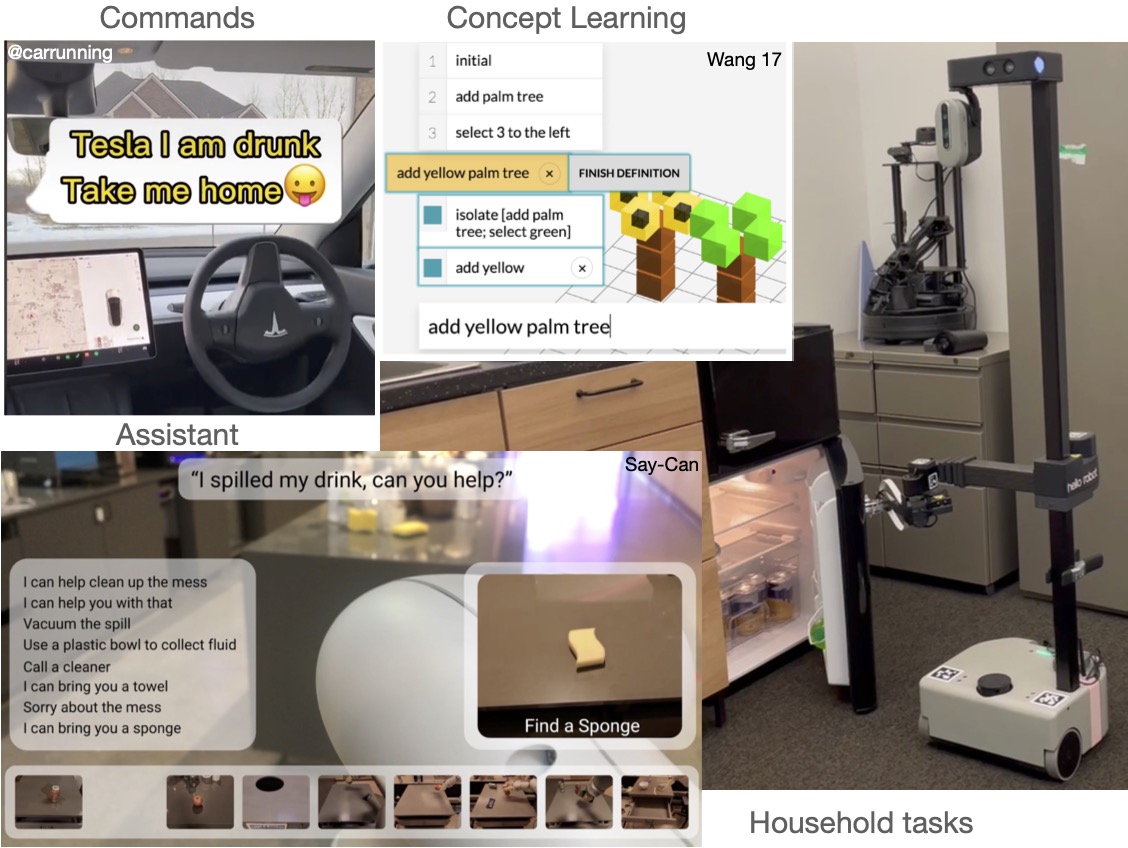

Where is my robot butler?

- Why is everyone using language models?

- Why use learning based methods when control works?

- What's with Foundation models for robotics?

- Will GPT-4, 5, 6? be a robot? 😱

Bring your own robot, let's add language to your research

Projects will be scoped by prior hardware/simulator experience -- but knowledge of Deep Learning + one specialty (NLP/CV/Robotics) is basically required. Send Qs to Yonatan (ybisk@cs).

- LLMs & Foundation Models

- Instruction following & Dialogue

- Task and Motion Planning

- End-Effector & real-valued control

- Semantic Mapping (2D and 3D)

- World Models

- How do you define or evaluate Dialogue?

- Limitations of offline and unimodal pretraining

- How does embodiment shape meaning?

- Discrete vs continuous spaces and representations.

- When is Sim2Real possible? What about manipulation?

- I only have one brain, do I need more than one model?

- Time & Place: 3:30pm - 4:50pm Tu/Th -- DH 1212

- Canvas (probably)

- Instructor: Yonatan Bisk

- Zoom? Nope

- Readings? The library is your friend

- Slides? No ma'am

Course Schedule

| Tues | Topic | Thurs | Topic |
|---|---|---|---|
| Aug 26 | Dualism | Aug 28 | Symbol Grounding |
| Sep 2 | Before 1990 + Prize | Sep 4 | Defining intelligence |
| Sep 9 | Building Worlds | Sep 11 | Linguistic Structure |
| Sep 16 | Group Pitches | Sep 18 | Perspective & Red team |
| Sep 23 | Space and Time | Sep 25 | Continuous |
| Sep 30 | Mapping & Planning | Oct 2 | Real valued + Sim2Real |
| Oct 7 | States & Tasks | Oct 9 | Mid-term presentations |
| Oct 14 | Fall Break | Oct 16 | Fall Break |
| Oct 21 | Manipulation | Oct 23 | Imagination |
| Oct 28 | VLAs | Oct 30 | Concept Learning |
| Nov 4 | Democracy Day | Nov 6 | Theory of Mind |
| Nov 11 | Project Hours (virtual) | Nov 13 | Project Hours (virtual) |
| Nov 18 | Pragmatics and Dialogue | Nov 20 | Do we need intelligence? |
| Nov 25 | TBD | Nov 27 | Thanksgiving |
| Dec 2 | Project Presentations | Dec 4 | Philosophy Presentations |

Course Structure and Grading

This course is available as both a seminar (6 credits) and project based (12 credits) course.

| Assignment | | Grades | Schedule |
|---|---|---|---|
| Definitions | | 3*10 (indiv) | Most weeks |
| Start | Pitches | 5 (group) | 9/18 |
| | Perspective + Red team | 5 (individual) | |
| Mid-semester | Presentation | 5 (group) | 10/9 |
| | Report | 10 (individual) | |
| | Feedback | 5 (individual) | |
| Project Hours | Key Experiments | 5 (group) | 11/20 |
| End of Semester | Presentation (Philosophy) | 5 (individual) | 12/4 |
| | Presentation (Project) | 10 (group) | 12/2 |
| | Report | 20 (group) | 12/5 |

Groups: Both seminar and project based assignments will be done in groups. Groups will likely be capped at five people.

Equal Participation: All reports must include a breakdown of each teammate's contributions.

Project Pitch (5pts)

- Task, Environment, and Skills Definitions

- Minimal language covered and stretch goals

- Who do you think will be most interested?

- What will your project enable in the future?

- Paint me a picture of how/where/who will use this

Midsemester Presentation (5pts)

- Interactive demo of basic skills

- Example of successful composition

- Demonstration and analysis of failures

- Proposal of changes for final demo (including rescoping)

Final Presentation (10pts)

- Remind everyone of what you're doing with demo of compositional and interactive instructions

- Updated technical hypothesis if you were to start over

- Evidence (e.g. from demonstration or analysis) for your new hypothesis

Final Report (20pts)

- 12 Credit: Technical write-up and specification of system

- 12 Credit: Technical write-up of model design

- All: Updated design elements for the intermediate reports

- All: Literature Review of state of the field

- All: Discussion of key limitations to progress in this space

- All: Teach me something or try and change my mind about something from class

Relevant Related Courses

While no other course addresses the role of language, several teach relevant techniques and tools that will also come up in this course.

- MLD Deep RL: Off policy RL is relevant, language is only discussed in CLIP

- RI Film Making using AI and Robotics: Closest! Multimodality, text-to-image/video, manipulation

- RI Deep Learning for Robotics: Human preferences (not language)

- RI Human-Robot Interaction: Non-verbal communication and coordination

- RI Learning for 3D Vision: Some discussion of language to 3D objects

- RI Intro to Robot Learning: RL and Sim2Real

- LTI Multimodal Machine Learning: General techniques and models for multimodality

- LTI Advanced Multimodal Machine Learning: Papers from this course are relevant to Adv MMML

- LTI Artificial Social Intelligence: "Robot" typically refers to avatars, lots of relevant material, but not articulated/embodied.

Course Policies

Late Assignments

- All teams have 5 late days; these apply only to reports (not demos).

COVID Details:

In the event a student tests positive for COVID-19, they will be invited to attend discussion virtually and will be expected to participate as usual. This includes participation points for raising their hands with questions/answers and submission of lab notebooks. Note that students who attend class while exhibiting symptoms will be told to leave and join virtually for the protection of all others present.

Accommodations for Students with Disabilities:

If you have a disability and have an accommodations letter from the Disability Resources office, we encourage you to discuss your accommodations and needs with the instructors as early in the semester as possible. We will work with you to ensure that accommodations are provided as appropriate. If you suspect that you may have a disability and would benefit from accommodations but are not yet registered with the Office of Disability Resources, we encourage you to contact them at access@andrew.cmu.edu.

FAQ

- Can we use our own platforms? Yes! What robots do you have? Also check out AI Maker Space

- What about custom sensors and hardware? Same answer :)

- What about other simulators? Same answer :)

- What about Web Agents? If multimodal and multi-turn

- LTI Curriculum Categories? 12 Hour version can be counted for a Task and a Lab

- Do I /need/ simulator experience? No, but plan to spend some time getting the engineering set up

- Can I attend discussion without registering? It's best to register (6hrs) even if you've finished your classes, since I need to prioritize time, energy, and space on registered students. I'll try and update this once I have a room confirmed with the registrar and see how much space we have in the class.